
Interpreting Neural Network Outputs Webinar


Friday 04 December 2020

01:00 PM - 02:00 PM


The Centre for Data Science presents its latest webinar, 'Interpreting Neural Network Outputs'.

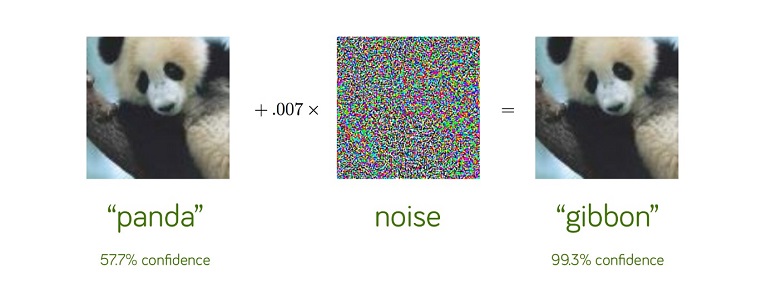

Why might a neural network mistake what clearly looks like a panda for a gibbon? Which input features and prototypes does a neural network consider most important in its predictions?

Dr Kojo Sarfo Gyamfi, Data Scientist at Loblaw Companies Ltd, Brampton, Canada, and Honorary Research Fellow at Coventry University, will present some of his recent research on extracting feature importance from neural networks. In particular, he will show how a deep network can be distilled into a shallower radial basis function (RBF) network, which is easier to interpret.

Dr Gyamfi received his PhD in Computer Science (Machine Learning) from Coventry University in 2018. He then worked as a postdoctoral researcher on the H2020 DOMUS project, investigating the application of machine learning to thermal systems in vehicles. He went on to work as a machine learning specialist at several commercial companies in Canada. He was recently awarded an Honorary Research Fellowship at Coventry University.

If you are interested in joining this event, please email research.eec@coventry.ac.uk