Projects and Publications

Research in the NTDC will be primarily concerned with vehicles and infrastructure, strongly influenced by considerations from transport and mobility systems more widely.

There will be two meta-level research themes:

- The Inputs: Influences on future vehicle design – why will transport look different in the future and what will it offer?

- The Outputs: Articulation of design through improved physical and virtual tools – developing the processes and technologies of design and analysis.

Research activities typically require medium to longer-term commitments of 2 to 5 years, can involve multiple partners, and are applicable to a number of companies, even across sectors. A good example might be empathic design to ensure vehicles of the future meet the needs of an ageing population. Research can also be sponsored by single companies.

Research projects

Biomechanics of luggage handling on moving vehicles

This research explores the biomechanics of luggage handling on moving vehicles. Using on-board accelerometers and GPS, the position, velocity and lateral/longitudinal accelerations of a wide variety of passenger vehicles have been recorded. Luggage handling postures have also been measured for a range of subjects, luggage weights and shelving heights using 3-dimensional optical tracking methods. This research will inform the optimal design of luggage storage solutions and provide a novel solution, especially for the elderly and infirm.

For further info contact James Shippen (aa2388@coventry.ac.uk)

Impact of visual appearance on the perceived comfort of automotive seats

Automotive seat comfort has become a major aspect in differentiation and customisation amongst competitors in a highly saturated automotive market. Unlike discomfort studies, this research investigates the multi-faceted concept of comfort. Based on initial findings that the mere change in visual appearance in otherwise physically identical seats dramatically shifts people’s perception of comfort, it explores the impact of specific design cues and underlying mechanisms. For further info contact Tugra Erol (erolt@uni.coventry.ac.uk)

Human-Centred Design of driverless pods

First and last mile mobility solutions are expected to become integral to future transport solutions. Current proposals focus on technological feasibility and user acceptance but tend to ignore basic user requirements. This project adopts a human-centric approach to the design of a driverless pod based on the passenger comfort experience model. The project is adopting a wide range of design tools to explore and evaluate the user experience. For further information contact Joscha Wasser (wasserj@uni.coventry.ac.uk)

Motion sickness in automated vehicles

The introduction of vehicle automation is predicated on the assumption that it will free up our time and allow us to engage in leisurely or economically-productive activities. Current design proposals conceptualise such future vehicles as “offices on wheels”. However, our research has shown that motion sickness will be a key issue due to conflicting motion cues as perceived by the passenger. Several projects investigate underlying mechanisms, motion sickness assessment methods, and design solutions. For further information contact Cyriel Diels (cyriel.diels@coventry.ac.uk)

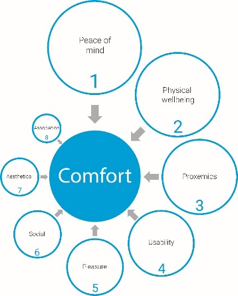

Passenger comfort experience in future vehicles

Automotive design and research are heavily biased towards the driver. With the advance of vehicle automation and shared mobility, however, it is the passenger experience that will take centre stage. Although at first sight this may not appear to differ from the experience in other modes of transport, it introduces novel psychological, physical and physiological challenges which are currently not well understood. The aim of this research is to identify and evaluate key comfort factors and their interrelatedness, and to develop a people-centred model of the passenger comfort experience. The model assists in defining the research agenda and informs the design of safe, usable, comfortable, and desirable future mobility solutions. For further information contact Cyriel Diels (cyriel.diels@coventry.ac.uk)

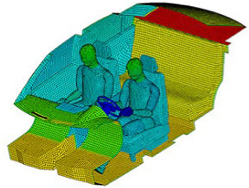

Thermal comfort perception in Electric Vehicles

As part of the EU-funded project DOMUS (Design and OptiMisation for efficient EVs based on a USer-centric approach), this research explores 1) the impact of task engagement (driver vs. passenger) on thermal comfort, 2) people’s mental models of cooling and heating and how Human Machine Interface (HMI) approaches may nudge occupants towards more effective and efficient HVAC use, and 3) the impact of vehicle interior form, shape, colour, and materials characteristics on our thermal comfort perception. For further information contact Cyriel Diels (cyriel.diels@coventry.ac.uk)

Compatibility between trust and non-driving related tasks in HMI design for automated driving

Acceptance of automated vehicles will depend on system trust and the ability to comfortably engage in non-driving related tasks (NDRT). This research explored a potential trade-off between the two. HMI designs compared different levels of intrusiveness of trust-related information by adopting a single head-up display versus a distributed display configuration. Driving simulator studies suggest a preference for co-locating trust information with NDRT content. For further information contact Cyriel Diels (cyriel.diels@coventry.ac.uk)

Relative importance of vehicle attributes

The aim of this research is to create a framework of how different vehicle attributes relate to different vehicle segments and to consumers’ psychological and behavioural responses over time. Results suggest considerable differences across segments and customers, as well as with the time of evaluation, and indicate that vehicle evaluation and benchmarking protocols need to consider careful participant screening and selection. For further information contact Milena Kukova (kukovam@uni.coventry.ac.uk)

Information expectations in highly and fully automated vehicles

Vehicle automation fundamentally changes the relationship with our vehicles, whereby system trust and occupant comfort will become key user requirements. This research explored what information users expect to receive across a wide range of traffic scenarios. Results indicated that users expected the HMI to provide information to promote system transparency, comprehensibility, and predictability. Subsequent design workshops were conducted to generate HMI concepts, which were then developed into early prototypes. For further information contact Cyriel Diels (cyriel.diels@coventry.ac.uk)

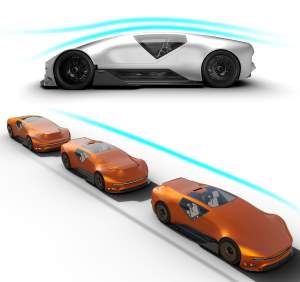

Platoon vehicles - Changing the shape of vehicles in close proximity to other vehicles

The discussion of aerodynamics in terms of vehicle shapes and styles and its association with autonomous and connected vehicles is very limited to date. This project would look at how packaging and exterior design (styling) can facilitate, and even enhance, connected and autonomous vehicle design in respect of single-line and multi-lane platoon systems in order to ease high-speed congestion and contribute towards reduced energy consumption and emissions. This is based on the idea of being able to “intelligently” morph shapes according to position in the platoon. For further information contact Geoff Le Good (aa0877@coventry.ac.uk).

Design Contribution for Platooning of Connected and Autonomous Vehicles

The opportunity for energy savings for vehicles travelling in close proximity arises from the exploitation of airflow interference effects to produce reductions in overall aerodynamic drag. Vehicles will require high levels of connectivity and advanced control systems in order to achieve these drag reductions, but of equal significance will be vehicle style, since changes in drag are highly sensitive to changes in shape. This project aims to investigate the effects on the efficiency of platoon configurations for a variety of upper-body forms, with the objective of understanding the contributions and opportunities for the design (styling) of future vehicles. For further information contact Geoff Le Good (g.legood@coventry.ac.uk)

Error recovery strategies in conversational automotive Human Machine Interfaces

The application of conversational user interfaces (CUI) in vehicles shows promising advantages over conventional interfaces because its hands-free, eyes-on-the-road interactions offer higher safety while driving. However, the currently available technology has its fair share of challenges with respect to non-intuitive, command-based speech interfaces and poor speech recognition. This research investigates the acceptability and user experience of speech-based systems for in-vehicle interactions, with the development of a more intuitive, naturalistic dialogue structure between humans and their cars. The idea is to implement human error recovery strategies to resolve the problems of misrecognition and misunderstanding. This study uses an interactive prototype developed using Amazon’s Alexa Voice Service and IBM’s Watson cognitive computing services.

Publications & Press

- Technology and Legislation are changing our relationship with the car (Blog)

- Texting and driving: A conscious choice that can never be justified

- Stage is set for LEGO robot creatures to do battle in Coventry

- Future of car design debate

- Honorary doctorates for motor industry supremos

- Coventry's transport research team supports £7million connected driving project

- West Midlands MEP praises "extraordinary" economic impact of Coventry University

- AME boss named as ‘Manufacturing Exemplar’ for boosting industry skills

- Institute for Manufacturing and Engineering claims major innovation award win

- Construction starts on uni’s new transport design centre

- Announcement press release